LukeW (lukew.com): Finding the Role of Humans in AI Products

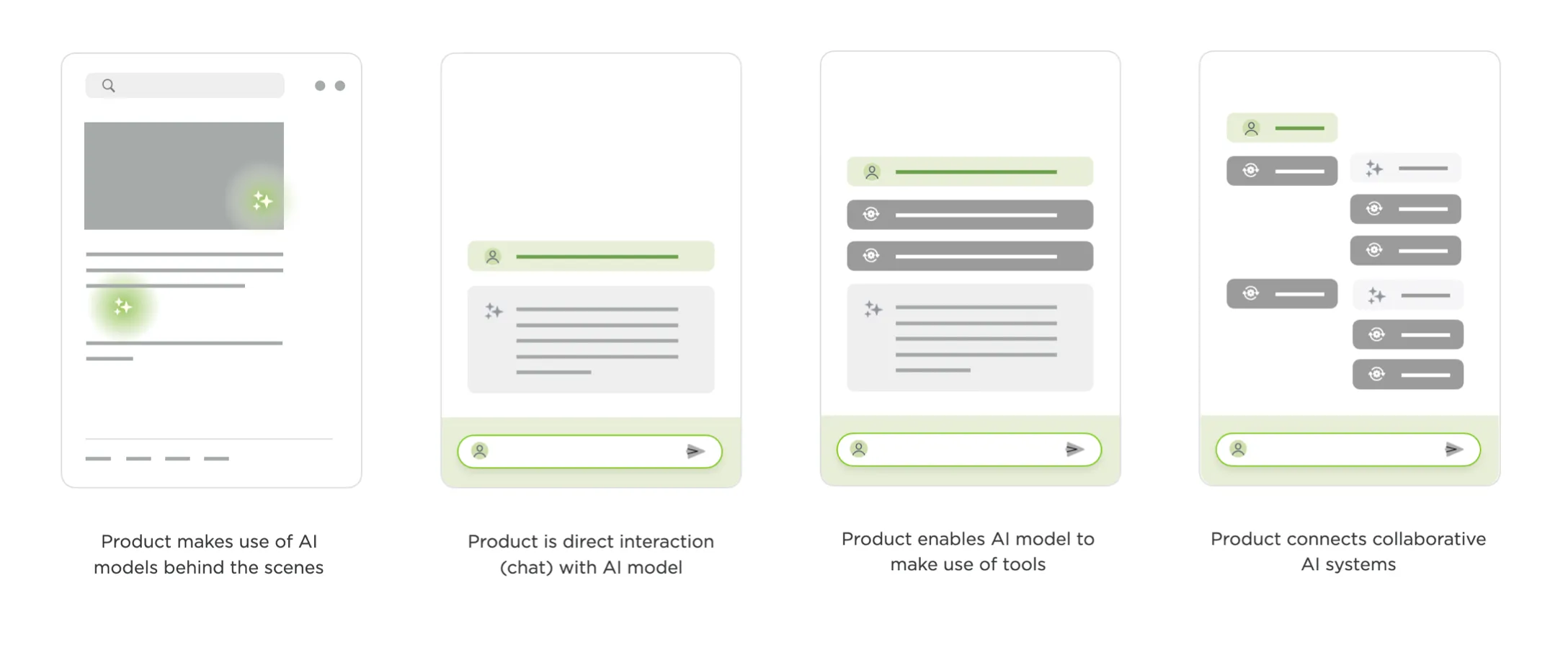

As AI products have evolved from models behind the scenes to chat interfaces to agentic systems to agents coordinating other agents, the design question has begun to shift. It used to be about how people interact with AI. Now it’s about where and how people fit in.

The clearest example of this is in software development. In Anthropic’s 2025 data, software developers made up 3% of U.S. workers but nearly 40% of all Claude conversations. A year later, their 2026 Measuring Agent Autonomy report showed software engineering accounting for roughly 50% of AI agent deployments. Whatever developers are doing with AI now, other domains are likely to follow suit.

And what developers have been doing is watching their role abstract upward at a pace that’s hard to overstate.

- First, humans wrote code. You typed, the computer did what you said.

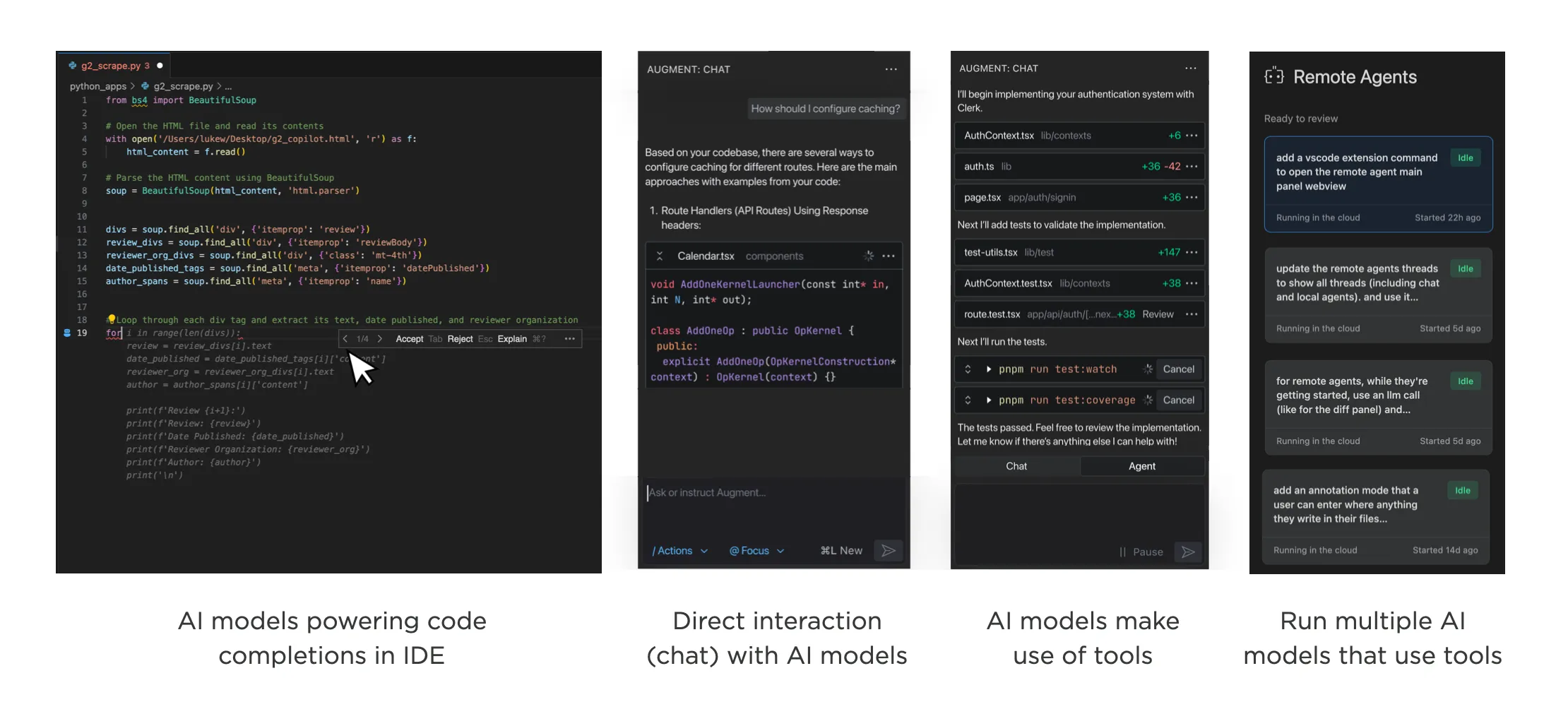

- Then machines started suggesting. GitHub Copilot’s early form was essentially AI behind the scenes, offering inline completions. You picked which suggestions to use. Still very much in the driver’s seat.

- Then humans started talking to AI directly. The chat era. You could describe what you wanted in natural language, paste in a broken function, brainstorm architecture. The model became a collaborator.

- Then agents got tools. The model doesn’t just respond with text anymore. It searches files, calls APIs, writes code, checks its own work, and decides what to do next based on the results. You’re no longer directing each step.

- Then came orchestration. A coordinator agent receives your request, builds a plan, and delegates to specialized sub-agents. You review and approve the plan, but execution fans out across multiple autonomous workers.

To make this more tangible: our developer workspace, Intent, uses agent orchestration. A coordinator agent analyzes what needs to happen, searches across relevant resources, and generates a plan. Once you approve that plan, the coordinator kicks off specialized agents to do the work: one handling the design system, another building out navigation, another coordinating their outputs. Your role is to review, approve, and steer.
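As a rough illustration of that plan, approve, delegate loop, here is a minimal Python sketch. It is not how Intent is implemented: `Task`, `Plan`, `build_plan`, `SUB_AGENTS`, and `orchestrate` are hypothetical names, the plan is hard-coded, and the sub-agents are plain functions standing in for model-backed workers. The only point is where the human sits: between the plan and its execution.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    agent: str        # which specialized sub-agent should handle this
    instruction: str  # what that sub-agent is asked to do


@dataclass
class Plan:
    request: str
    tasks: list[Task] = field(default_factory=list)


def build_plan(request: str) -> Plan:
    """Coordinator step: turn a request into delegated tasks.

    Hard-coded for illustration; a real coordinator would generate this.
    """
    return Plan(request, [
        Task("design_system", f"Define components for: {request}"),
        Task("navigation", f"Build navigation for: {request}"),
    ])


# Hypothetical sub-agents: simple functions in place of autonomous workers.
SUB_AGENTS: dict[str, Callable[[str], str]] = {
    "design_system": lambda instruction: f"[design_system] done: {instruction}",
    "navigation": lambda instruction: f"[navigation] done: {instruction}",
}


def orchestrate(request: str, approve: Callable[[Plan], bool]) -> list[str]:
    """Plan, pause for human approval, then fan out to sub-agents."""
    plan = build_plan(request)
    if not approve(plan):  # the human checkpoint
        return []          # nothing runs without approval
    return [SUB_AGENTS[task.agent](task.instruction) for task in plan.tasks]


if __name__ == "__main__":
    # The approve callback is where review and steering would happen;
    # here it simply accepts any plan with five or fewer tasks.
    for line in orchestrate("settings page", approve=lambda plan: len(plan.tasks) <= 5):
        print(line)
```

In this sketch the human never touches an individual task. Their whole influence is concentrated in the `approve` callback, which is the trade of direct control for leverage that orchestration implies.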

Stack that one more level and you’ve got machines running machines running machines. At which point: where exactly does the human sit?

To use a metaphor we’re all familiar with: a manager keeps tabs on a handful of direct reports. A director manages managers. A CEO manages directors. At each layer, the person at the top trades direct understanding for leverage. They see less of the actual work and more of the summaries, status updates, and roll-ups.

But being an effective CEO is extraordinarily rare. Not just thinking you can do it, but actually doing it well. And a CEO of CEOs? The number of people who have operated at that scale is vanishingly small.

Which raises two questions. First, how far up the stack can humans actually go? Agent orchestration? Orchestration of orchestration? Where does it break down? Second, at whatever level we land on, what skills do people need to operate there?

The durable skills may turn out to be steering, delegation, and awareness: knowing what to ask for, how much autonomy to grant, and when to look under the hood. These aren’t programming skills. They’re closer to the skills of a good leader who knows when to let the team run and when to step in.

We used to design how people interact with software. Now we’re designing how much they need to.